🧊 PolarQuant Q5 -- Qwen3.5-27B

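The full 27B Qwen3.5 model compressed with PolarQuant Q5: WikiText-2 perplexity 5.37, only +0.13 above the BF16 baseline of 5.24, while cutting inference VRAM from ~56 GB (FP16) to roughly 18-20 GB (with torchao INT4 at inference).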

PolarQuant brings near-lossless 5-bit quantization to the larger Qwen3.5-27B model, enabling it to run on hardware that cannot fit the full FP16 model (56 GB).

🎯 Key Results

| Metric | Value |
|---|---|
| Method | PolarQuant Q5 + torchao INT4 |
| Perplexity (WikiText-2) | 5.37 |
| BF16 Baseline PPL | 5.24 |
| Delta from BF16 | +0.13 (near-lossless) |
| Original Size | ~56 GB (FP16) |
| Inference VRAM | ~18-20 GB (PolarQuant Q5 + torchao INT4) |
| Quantization | PolarQuant Q5 (5-bit, block_size=128) |

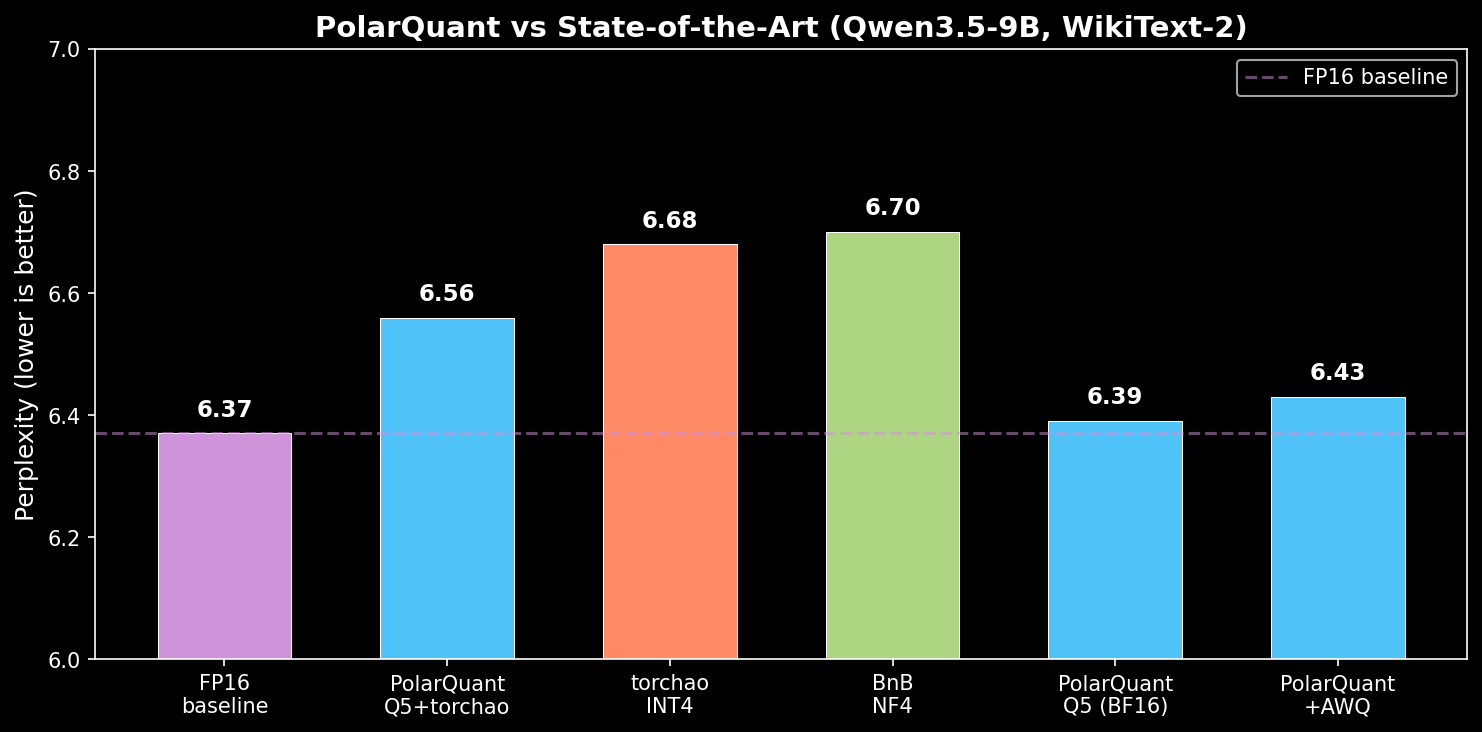

📊 Benchmark Comparison

| Method | PPL | Delta | Notes |
|---|---|---|---|
| BF16 baseline | 5.24 | -- | Full precision |
| PolarQuant Q5 + torchao | 5.37 | +0.13 | Near-lossless |
| torchao INT4 (absmax) | ~5.50 | +0.26 | Standard quantization |

The 27B model loses less perplexity to PolarQuant than the 9B does: +0.13 PPL here versus +0.19 for the 9B model. This is consistent with the common observation that larger models are more robust to quantization.
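Perplexity is exp(mean per-token negative log-likelihood), so a PPL delta converts directly into a relative change and an extra loss per token. A small helper (the name `ppl_cost` is hypothetical) applied to the numbers in the benchmark table:

```python
import math

BF16_PPL = 5.24
QUANTIZED_PPL = {                      # values from the benchmark table
    "PolarQuant Q5 + torchao": 5.37,
    "torchao INT4 (absmax)": 5.50,     # reported as ~5.50, so approximate
}


def ppl_cost(ppl: float, baseline: float = BF16_PPL) -> tuple[float, float]:
    """Relative PPL increase and extra mean NLL in nats/token, using PPL = exp(mean NLL)."""
    return ppl / baseline - 1, math.log(ppl) - math.log(baseline)


for name, ppl in QUANTIZED_PPL.items():
    rel, d_nll = ppl_cost(ppl)
    print(f"{name:24s} +{rel:.1%} PPL  +{d_nll:.4f} nats/token")
```

By this measure PolarQuant adds roughly half the extra loss of plain absmax INT4 (about 0.025 vs 0.048 nats/token), keeping in mind that the INT4 figure is approximate.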

🔬 Why PolarQuant?

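PolarQuant applies a Hadamard rotation that makes each block's weight distribution approximately Gaussian, then quantizes with Lloyd-Max centroids, the MSE-optimal scalar quantization levels for a Gaussian source. The quantizer is therefore optimal for the transformed distribution to the extent that the rotated weights really are Gaussian.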

Quantization: Original Weights --> Normalize --> Hadamard Rotate --> Lloyd-Max Quantize --> Store Codes

Inference: Codes --> Centroid Lookup --> Inverse Hadamard --> Scale by Norms --> BF16 Weights --> torchao INT4 --> cuBLAS
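To make the encode/decode pipeline concrete, here is a minimal pure-Python sketch of one 128-weight block going through both directions. It is not the author's implementation. The Lloyd-Max initialisation, the sqrt(block_size) rescaling that maps a unit-norm rotated block onto N(0,1), the function names, and the Laplace-distributed stand-in weights are all assumptions made for illustration.

```python
import bisect
import math
import random
from statistics import NormalDist

BLOCK = 128            # block_size from the model card
LEVELS = 2 ** 5        # Q5 -> 32 centroids
STD_NORMAL = NormalDist()


def lloyd_max_centroids(levels: int, iters: int = 100) -> list[float]:
    """Lloyd-Max (MSE-optimal) reconstruction levels for N(0, 1)."""
    # Assumed initialisation: midpoints of `levels` equal-probability bins.
    c = [STD_NORMAL.inv_cdf((i + 0.5) / levels) for i in range(levels)]
    for _ in range(iters):
        # Decision boundaries sit halfway between neighbouring centroids.
        b = [-math.inf] + [(lo + hi) / 2 for lo, hi in zip(c, c[1:])] + [math.inf]
        # Each centroid moves to E[X | b_i < X < b_{i+1}] for X ~ N(0, 1).
        c = [(STD_NORMAL.pdf(lo) - STD_NORMAL.pdf(hi)) / (STD_NORMAL.cdf(hi) - STD_NORMAL.cdf(lo))
             for lo, hi in zip(b, b[1:])]
    return c


def fwht(x: list[float]) -> list[float]:
    """Orthonormal fast Walsh-Hadamard transform; len(x) must be a power of 2.
    The normalized Hadamard matrix is symmetric and orthogonal, so fwht is its own inverse."""
    y = list(x)
    h = 1
    while h < len(y):
        for i in range(0, len(y), 2 * h):
            for j in range(i, i + h):
                y[j], y[j + h] = y[j] + y[j + h], y[j] - y[j + h]
        h *= 2
    scale = 1 / math.sqrt(len(y))
    return [v * scale for v in y]


def encode_block(w: list[float], centroids: list[float]) -> tuple[list[int], float]:
    """Normalize -> Hadamard rotate -> nearest Lloyd-Max centroid."""
    norm = math.sqrt(sum(v * v for v in w)) or 1.0
    # A unit-norm block under an orthonormal rotation has per-coordinate variance
    # ~1/len(w); rescaling by sqrt(len(w)) targets N(0, 1) (assumed convention).
    z = [v * math.sqrt(len(w)) for v in fwht([v / norm for v in w])]
    bounds = [(lo + hi) / 2 for lo, hi in zip(centroids, centroids[1:])]
    codes = [bisect.bisect_left(bounds, v) for v in z]  # indices 0..31, fit in int8
    return codes, norm


def decode_block(codes: list[int], norm: float, centroids: list[float]) -> list[float]:
    """Centroid lookup -> inverse Hadamard -> scale by the block norm."""
    z = [centroids[k] / math.sqrt(len(codes)) for k in codes]
    return [v * norm for v in fwht(z)]


# Demo on heavy-tailed (Laplace) stand-in weights; no real checkpoint is needed.
random.seed(0)
cents = lloyd_max_centroids(LEVELS)
w = [random.choice((-1, 1)) * random.expovariate(50) for _ in range(BLOCK)]
codes, norm = encode_block(w, cents)
w_hat = decode_block(codes, norm, cents)
rel_err = math.sqrt(sum((a - b) ** 2 for a, b in zip(w, w_hat))) / norm
print(f"relative L2 error: {rel_err:.4f}")
```

The rotation is what makes the Gaussian centroid table a reasonable fit: each rotated coordinate is a signed sum of all 128 inputs, so even heavy-tailed blocks come out close to normal.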

Key Insight

Larger models appear to benefit more from PolarQuant. Likely contributing factors:

- More parameters mean better statistical convergence to Gaussian after rotation

- The Lloyd-Max centroids become increasingly optimal as block statistics stabilize

- Quantization error is distributed across more parameters, reducing per-token impact

🚀 Quick Start

CUDA (torchao INT4) -- Recommended

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
from torchao.quantization import quantize_, Int4WeightOnlyConfig

MODEL_ID = "caiovicentino1/Qwen3.5-27B-PolarQuant-Q5"

# Load in BF16; trust_remote_code=True is required for the DeltaNet layers
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    dtype="bfloat16",
    device_map="auto",
    trust_remote_code=True,
)

# Quantize the linear layers to torchao INT4 weight-only (group_size=128) for inference
quantize_(model, Int4WeightOnlyConfig(group_size=128))

tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
inputs = tokenizer("Explain the implications of quantum entanglement:", return_tensors="pt").to(model.device)

output = model.generate(**inputs, max_new_tokens=300)
print(tokenizer.decode(output[0], skip_special_tokens=True))
```

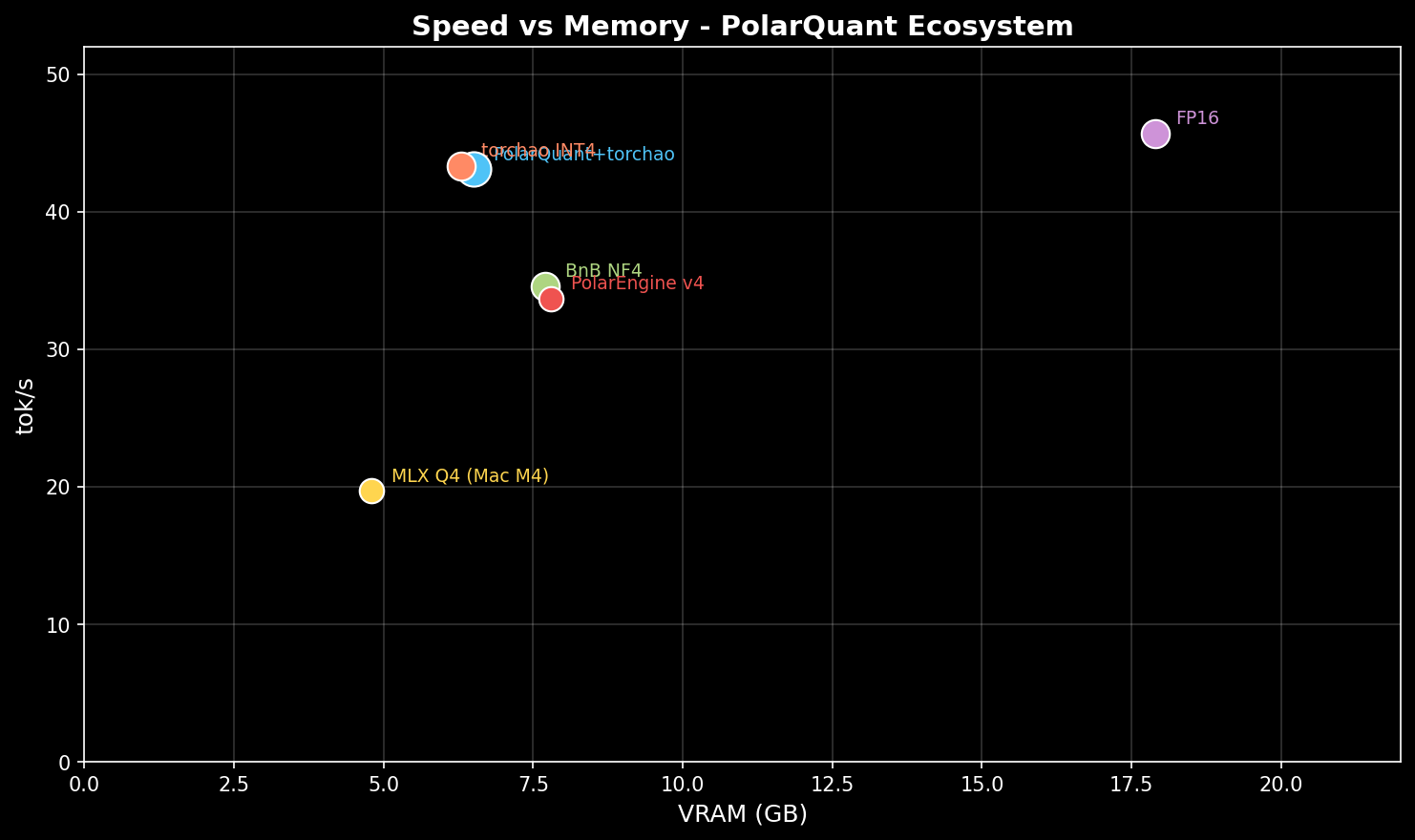

🖥️ Hardware Requirements

| Configuration | VRAM Required | Notes |
|---|---|---|
| FP16 | ~56 GB | A100 80GB / H100 |
| PolarQuant Q5 + torchao INT4 | ~18-20 GB | RTX 4090 / A6000 / RTX PRO 6000 |
| Multi-GPU (2x) | ~10 GB each | 2x RTX 3090/4090 |
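A back-of-the-envelope check on these figures, counting weight memory only. It assumes a nominal 27e9 parameters all stored at one precision and, as an assumption about torchao's INT4 layout, one bf16 scale plus one bf16 zero-point per group of 128 weights. The card's ~56 GB FP16 figure is a little above the 54 GB computed here, presumably because the true parameter count or overhead differs.

```python
def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Weight storage in GB (1 GB = 1e9 bytes), ignoring activations and caches."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 27e9      # nominal size; the exact parameter count is an assumption
GROUP_SIZE = 128     # matches Int4WeightOnlyConfig(group_size=128)

# Assumption: each group of 128 INT4 weights carries one bf16 scale and one bf16 zero-point.
INT4_BITS = 4 + (16 + 16) / GROUP_SIZE   # 4.25 bits per weight

fp16_gb = weight_gb(N_PARAMS, 16)
int4_gb = weight_gb(N_PARAMS, INT4_BITS)

print(f"FP16 weights:         {fp16_gb:.1f} GB")
print(f"INT4 weights:         {int4_gb:.1f} GB")
print(f"INT4 per GPU (2-way): {int4_gb / 2:.1f} GB")
```

The gap between ~14 GB of INT4 weights and the ~18-20 GB in the table is plausibly headroom for recurrent state, activations, and the CUDA context; the exact split depends on context length and batch size.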

Tip: Qwen3.5-27B uses the DeltaNet architecture with `flash-linear-attention` for efficient inference. Ensure you have `trust_remote_code=True` and the latest `transformers` version.
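A quick way to confirm the packages are present before loading the model. This is a small stdlib-only helper (the name `installed_versions` is hypothetical); treating `flash-linear-attention` as the PyPI distribution name is an assumption, and the check only reports versions, it does not verify that they are recent enough.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, Optional, Tuple

# PyPI distribution names; "flash-linear-attention" is an assumed name.
REQUIRED: Tuple[str, ...] = ("transformers", "torchao", "flash-linear-attention")


def installed_versions(packages: Tuple[str, ...] = REQUIRED) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if it is missing."""
    result: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            result[name] = version(name)
        except PackageNotFoundError:
            result[name] = None
    return result


for name, ver in installed_versions().items():
    print(f"{name:24s} {ver or 'NOT INSTALLED'}")
```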

🔧 Technical Details

| Component | Details |
|---|---|
| Base Model | Qwen3.5-27B (DeltaNet architecture) |
| Quantization | PolarQuant Q5 (5-bit, block_size=128) |
| Rotation | 128x128 normalized Walsh-Hadamard matrix |
| Centroids | Pre-computed MSE-optimal for N(0,1) via 100 Lloyd-Max iterations |
| Storage | int8 codes + fp16 per-block norms + fp32 centroid table |
| Inference | torchao INT4 cuBLAS (group_size=128) |
| Architecture | DeltaNet (stateful, uses flash-linear-attention) |
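The storage row implies a simple bits-per-weight budget. A quick calculation, assuming every one of a nominal 27e9 parameters is stored this way and that a single 32-entry fp32 centroid table is shared (both assumptions; embeddings or other tensors may be kept in higher precision). The bit-packed variant is a hypothetical layout included only for comparison.

```python
BLOCK = 128
N_PARAMS = 27e9                 # nominal; assumption

CODE_BITS = 8                   # one int8 code per weight (only 5 of the 8 bits carry information)
NORM_BITS = 16                  # one fp16 norm per 128-weight block
TABLE_BYTES = 2 ** 5 * 4        # 32 fp32 centroids = 128 bytes, negligible

stored_bpw = CODE_BITS + NORM_BITS / BLOCK   # 8.125 bits/weight as stored
packed_bpw = 5 + NORM_BITS / BLOCK           # 5.125 for a hypothetical bit-packed layout


def checkpoint_gb(n_params: float, bpw: float, extra_bytes: int = 0) -> float:
    """Approximate storage size in GB (1e9 bytes)."""
    return (n_params * bpw / 8 + extra_bytes) / 1e9


print(f"int8 codes + fp16 norms:     {checkpoint_gb(N_PARAMS, stored_bpw, TABLE_BYTES):.2f} GB")
print(f"5-bit packed (hypothetical): {checkpoint_gb(N_PARAMS, packed_bpw, TABLE_BYTES):.2f} GB")
print(f"FP16 reference:              {checkpoint_gb(N_PARAMS, 16):.2f} GB")
```

Under these assumptions the int8-code layout works out to roughly half the FP16 size; the per-block norms add only 0.125 bits per weight.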

DeltaNet Notes

Qwen3.5 uses DeltaNet (linear attention with the delta rule), which is stateful:

- Requires `flash-linear-attention` for efficient inference

- Speculative decoding is not currently supported

- Use `trust_remote_code=True` when loading
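"Stateful" here means each layer keeps a fixed-size matrix state that every token updates in place, instead of a KV cache that only grows. The sketch below uses the basic delta rule from the DeltaNet literature, S <- S + beta * (v - S k) k^T with read-out S q. Qwen3.5's actual layers (run through flash-linear-attention) may add gating, normalization and chunked kernels, so treat this as illustrative; the function names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(S: Matrix, x: Vector) -> Vector:
    """Compute S @ x for a d_v x d_k state matrix."""
    return [sum(s_ij * x_j for s_ij, x_j in zip(row, x)) for row in S]


def delta_rule_step(S: Matrix, k: Vector, v: Vector, beta: float) -> Matrix:
    """One delta-rule write: S <- S + beta * (v - S k) k^T.

    The state is corrected toward v along key k instead of simply
    accumulating v k^T, so rewriting a key replaces its old value."""
    err = [v_i - p_i for v_i, p_i in zip(v, matvec(S, k))]
    return [[s_ij + beta * e_i * k_j for s_ij, k_j in zip(row, k)]
            for row, e_i in zip(S, err)]


d_k, d_v = 4, 2
S = [[0.0] * d_k for _ in range(d_v)]   # fixed-size state, independent of sequence length
k1, k2 = [1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]

S = delta_rule_step(S, k1, [1.0, 2.0], beta=1.0)
S = delta_rule_step(S, k2, [3.0, 4.0], beta=1.0)
S = delta_rule_step(S, k1, [5.0, 6.0], beta=1.0)  # overwrite the value stored under k1

print(matvec(S, k1))  # [5.0, 6.0]
print(matvec(S, k2))  # [3.0, 4.0]
```

A vanilla linear-attention update (S += v k^T) would have returned [6.0, 8.0] for k1. Because every token mutates S, rejecting draft tokens during speculative decoding would require snapshotting or recomputing the state, which is one plausible reason speculative decoding is not supported yet.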

🔗 Links

- 📄 Paper (arXiv) -- PolarQuant: Optimal Gaussian Weight Quantization

- 💻 Code (GitHub) -- Full research codebase

- 🔌 vLLM Plugin -- Production inference integration

- 🧊 9B Version -- Smaller model, full benchmarks

📖 Citation

```bibtex
@article{vicentino2026polarquant,
  title={PolarQuant: Optimal Gaussian Weight Quantization via Hadamard Rotation for LLM Compression},
  author={Vicentino, Caio},
  journal={arXiv preprint arXiv:2603.7424577},
  year={2026}
}
```

🙏 Acknowledgements

Built with PyTorch, torchao, flash-linear-attention, and the Qwen team's open-weight models.


Model tree for caiovicentino1/Qwen3.5-27B-PolarQuant-Q5

Base model

Qwen/Qwen3.5-27B